All posts by nouyang


Colored python debugging output / errors / tracebacks (in bash)

The illegibility of python tracebacks has bugged me for a while, so I finally got around to adding color to my terminal (bash) output.

Basically, the “lightest” way I found is to pipe python’s output (stderr merged into stdout, since stderr is where tracebacks go) through a sed script that injects some color codes before spitting the output back to your terminal (and then to your eyes).

My system is Ubuntu 18.04, in bash. Not sure how it’d translate over to Mac or Windows, since it relies on these annoying color escape codes (according to the internet, it should work in OSX and Windows terminals).

How

Add to your ~/.bashrc :

    norm="$(printf '\033[0m')"                      # return to "normal"
    bold="$(printf '\033[0;1m')"                    # set bold
    red="$(printf '\033[0;31m')"                    # set red
    boldyellowonblue="$(printf '\033[0;1;33;44m')"  # set bold yellow on blue
    boldyellow="$(printf '\033[0;1;33m')"           # set bold yellow
    boldred="$(printf '\033[0;1;31m')"              # set bold, and set red

    # copython = colored python: merge stderr into stdout, then color the traceback with sed
    copython() {
        python "$@" 2>&1 | sed -e "s/Traceback/${boldyellowonblue}&${norm}/g" \
            -e "s/File \".*\.py\".*$/${boldyellow}&${norm}/g" \
            -e "s/, line [[:digit:]]\+/${boldred}&${norm}/g"
    }

Then to use it, run

    $ source ~/.bashrc        # reload file without restarting terminal
    $ copython myprogram.py   # copython = colored python
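To make it clearer what the three sed expressions are doing, here is a rough Python sketch of the same substitutions. This is only an illustration, not what `copython` actually runs: the names `RULES` and `colorize_line` are made up for this example, and `[0-9]+` stands in for sed's `[[:digit:]]\+`.

```python
import re

NORM = "\033[0m"
BOLD_YELLOW_ON_BLUE = "\033[0;1;33;44m"
BOLD_YELLOW = "\033[0;1;33m"
BOLD_RED = "\033[0;1;31m"

# (pattern, color) pairs, applied in order like the three `sed -e` expressions.
RULES = [
    (re.compile(r"Traceback"), BOLD_YELLOW_ON_BLUE),
    (re.compile(r'File ".*\.py".*$'), BOLD_YELLOW),
    (re.compile(r", line [0-9]+"), BOLD_RED),
]


def colorize_line(line: str) -> str:
    """Wrap every match in its color code plus a reset, like sed's `&`."""
    for pattern, color in RULES:
        line = pattern.sub(lambda m, c=color: c + m.group(0) + NORM, line)
    return line
```

Note that the third rule runs on text the second rule has already colored, so the reset after the line number also switches off the yellow for the rest of that line. The sed version behaves the same way.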

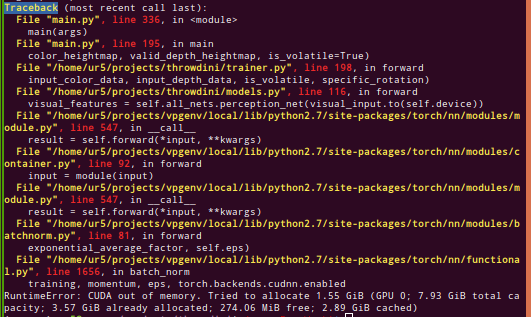

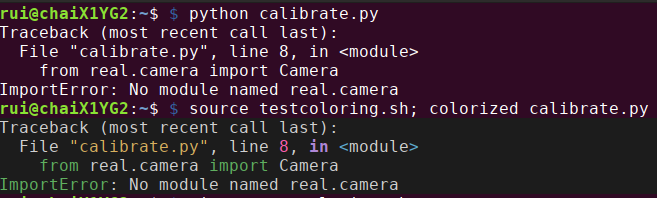

Originally, the program outputs:

With

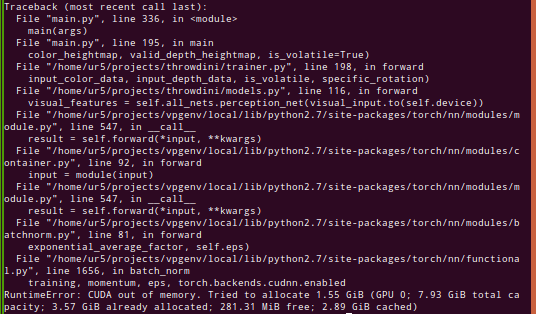

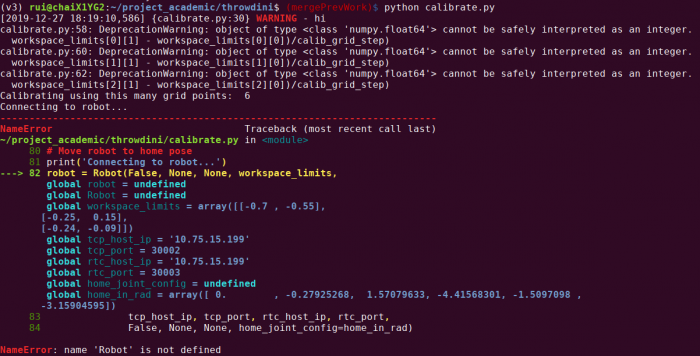

With `copython`it now outputs

Based on:

https://stackoverflow.com/questions/20909942/color-linux-command-output/20910449#20910449

Mostly, I just tacked on more sed regexes, and changed the colors around a bit.

The result is actually kinda inverted from a UX standpoint (you probably want the actual code highlighted more than the file / line number), but we’re reaching the limits of my patience with regexes here.

This is fairly lightweight but definitely still tacks on some time! (My regexes are not very efficient, I’m sure.) Fortunately, it’s easy to switch between `copython` and `python` if you’re concerned 🙂

Explanation: ANSI Color Codes

Note that \033[ marks the beginning of a code, which helped me understand a bit better what is going on.

    0m          # normal
    0;1m        # bold
    0;1;33;44m  # bold yellow on blue
    0;1;33m     # bold yellow
    0;1;31m     # bold red

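So the numbers are separated by semicolons and the trailing m ends the code. The leading 0 resets everything to normal, the 1 indicates bold, the 33 indicates the foreground color, and the 44 indicates the background color. It is the value of each number that matters, not its position.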
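As a small sketch of how these codes are put together, the helper below glues the numbers into one escape sequence. The name `sgr` is just something I picked for this example (these codes are usually called "Select Graphic Rendition" parameters); it is not from any library.

```python
ESC = "\033"  # the escape character, written in octal like in the bashrc snippet


def sgr(*params: int) -> str:
    """Build an escape sequence of the form ESC [ p1;p2;... m"""
    return ESC + "[" + ";".join(str(p) for p in params) + "m"


NORM = sgr(0)                            # back to normal
BOLD_YELLOW_ON_BLUE = sgr(0, 1, 33, 44)  # reset, bold, yellow text, blue background
```

Printing `BOLD_YELLOW_ON_BLUE + "hello" + NORM` in a terminal that understands these codes should show the word in bold yellow on blue.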

There are eight main colors, going from 30 to 37 for the foreground and from 40 to 47 for the background.

    # Foreground Regular Colors
    30m  # Black
    31m  # Red
    32m  # Green
    33m  # Yellow
    34m  # Blue
    35m  # Purple
    36m  # Cyan
    37m  # White

    # Background Regular Colors
    40m  # Black
    41m  # Red
    42m  # Green
    43m  # Yellow
    44m  # Blue
    45m  # Purple
    46m  # Cyan
    47m  # White
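Since the two lists line up (the background code is always the foreground code plus 10), the whole table can be generated instead of typed out. A minimal sketch, where `COLOR_NAMES`, `FOREGROUND`, `BACKGROUND` and `paint` are names invented for this example:

```python
COLOR_NAMES = ["black", "red", "green", "yellow", "blue", "purple", "cyan", "white"]

# Foreground codes run 30..37, background codes run 40..47, in the same color order.
FOREGROUND = {name: 30 + i for i, name in enumerate(COLOR_NAMES)}
BACKGROUND = {name: 40 + i for i, name in enumerate(COLOR_NAMES)}


def paint(text, fg, bg=None, bold=False):
    """Wrap text in a color code and a trailing reset."""
    codes = [0]  # start from "normal", like the codes in the bashrc snippet
    if bold:
        codes.append(1)
    codes.append(FOREGROUND[fg])
    if bg is not None:
        codes.append(BACKGROUND[bg])
    prefix = "\033[" + ";".join(str(c) for c in codes) + "m"
    return prefix + text + "\033[0m"
```

For example, `print(paint("Traceback", "yellow", "blue", bold=True))` builds the same `0;1;33;44m` code that `copython` uses for the word Traceback.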

Alternatives: Vimcat

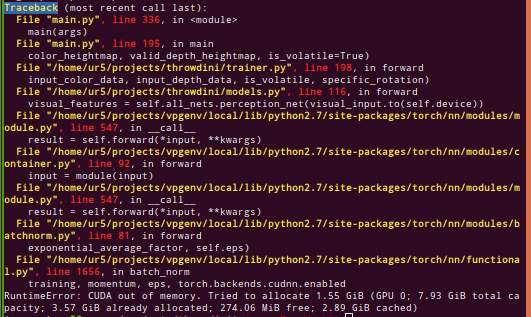

Pipe it through vim! Specifically my step-by-step answer is at the bottom. Unfortunately, this both (a) requires installing things and (b) is slow. See before and after in this image:

CON: It’s noticeably slow.

Alternatives: IPython

    import sys
    from IPython.core import ultratb
    sys.excepthook = ultratb.FormattedTB(mode='Verbose', color_scheme='Linux', call_pdb=False)

CON: Have to edit every single python file! Also incredibly slow.
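For comparison, the `sys.excepthook` mechanism that the IPython snippet relies on can be sketched with only the standard library. This is not what IPython does internally, just a rough illustration of the hook itself. The names `format_colored` and `colored_excepthook` are made up here, only the file lines get colored, and it has the same "edit every file" drawback.

```python
import re
import sys
import traceback

NORM = "\033[0m"
BOLD_YELLOW = "\033[0;1;33m"

# Same idea as the sed rule for file lines; MULTILINE lets $ match at each line end.
FILE_LINE = re.compile(r'File ".*\.py".*$', re.MULTILINE)


def format_colored(exc_type, exc, tb) -> str:
    """Format a traceback as usual, then color the 'File ...' lines."""
    text = "".join(traceback.format_exception(exc_type, exc, tb))
    return FILE_LINE.sub(lambda m: BOLD_YELLOW + m.group(0) + NORM, text)


def colored_excepthook(exc_type, exc, tb):
    """Drop-in replacement for the default hook; writes to stderr."""
    sys.stderr.write(format_colored(exc_type, exc, tb))


# To install it, a script would run: sys.excepthook = colored_excepthook
```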

[END]

Notes to self

next post todo: cover coloring the output of python’s `logging` module

https://stackoverflow.com/questions/384076/how-can-i-color-python-logging-output

specifically this

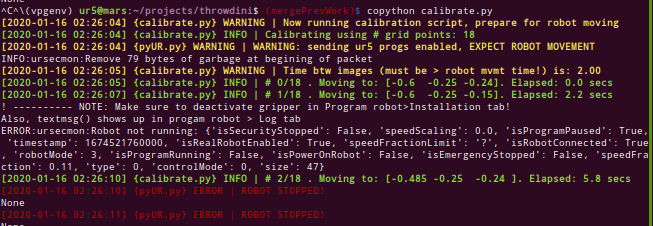

sneak preview:

I still don’t fully understand the nesting of loggers — you can see ‘white’ output from some other logger being called by the main file. (also a few print() messages I haven’t converted yet).

linkback to hexblog draft of this post